How Automated Testing Helps Law Firms Maintain System Stability at Scale

Before you scroll past, think about this for a moment.

- When was the last time you tested how your system behaves during month-end billing, when everyone is logged in at the same time?

- If your practice management system slowed down or stopped tomorrow morning, how quickly would your team notice, and how long before it starts affecting clients?

- After your last upgrade, are you fully confident everything is working as expected across billing, reporting, and integrations, or are you relying on things looking fine on the surface?

Most firms do not think about these questions until something goes wrong. The ones that scale without disruption take a different approach. They build stability into their systems before issues begin to surface.

Law firms today rely on complex technology environments. Platforms like Elite 3E and ProLaw support everything from time tracking and billing to reporting and financial visibility, and these systems continue to evolve through customizations, integrations, and regular updates.

With every change, something can shift beneath the surface. The effect may not be visible at first, but over time it begins to erode accuracy, timelines, and confidence in the system.

Firms that manage this well do not rely on assumptions. They take a structured approach to testing, automate validation where it matters, and ensure their systems continue to perform as expected as they grow.

That is where automated testing begins to play a critical role. To understand how firms are addressing this challenge, it helps to look at how testing itself is evolving.

Why Smart Firms Are Moving Beyond Manual Testing

Manual regression testing served law firms well for many years.

A dedicated IT team working through a structured checklist before each upgrade reflects a strong commitment to system quality. As firms scale, the opportunity shifts from maintaining this approach to improving it. Manual testing has clear limits. It cannot simulate 500 simultaneous users, and complex workflow variations require a level of coverage that manual processes struggle to achieve consistently.

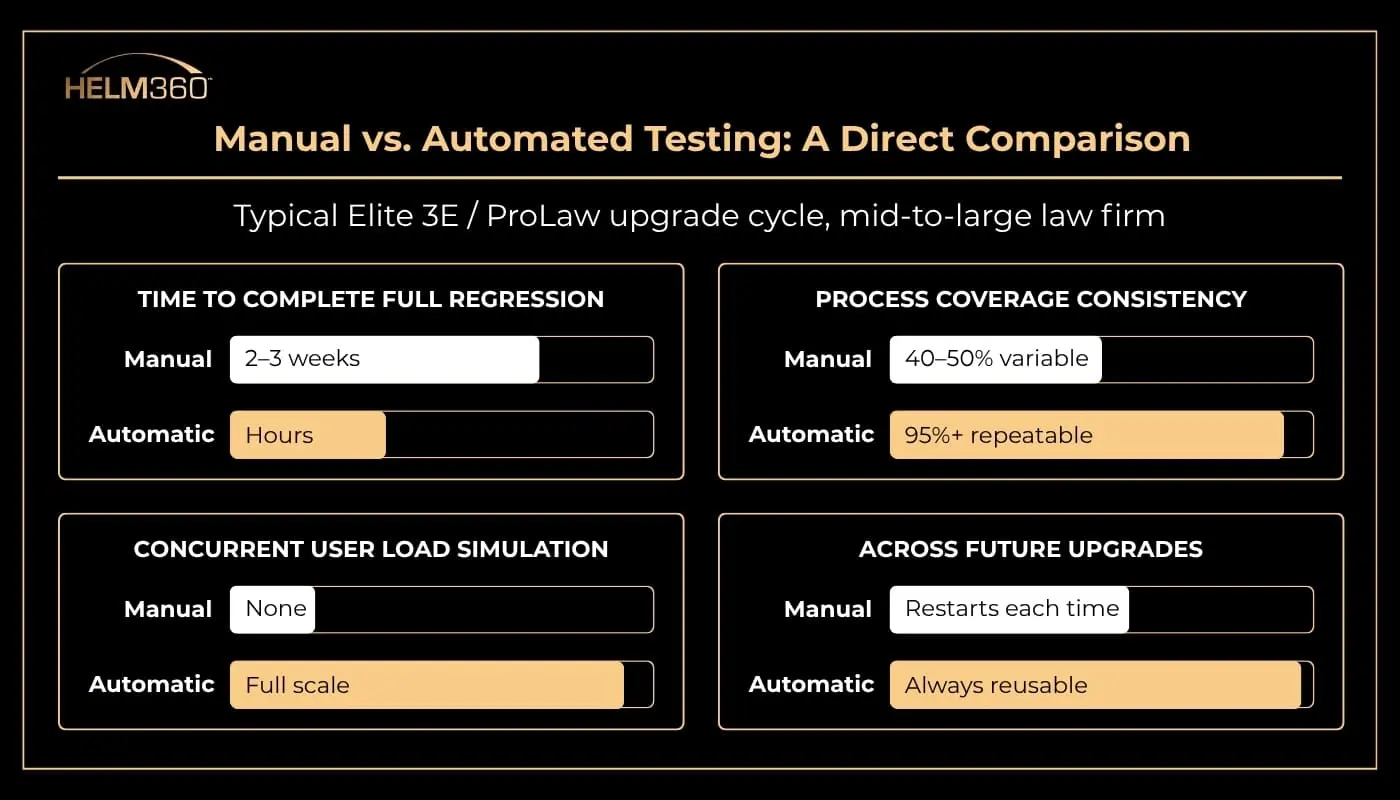

According to LawNext’s coverage of Helm360’s QA practice, full regression testing can take two to three weeks per upgrade cycle. This effort often depends on experienced team members, which makes the process time-intensive and difficult to scale. Automation returns that time in a predictable and repeatable way.

Bloomberg Law’s Legal Ops and Tech Survey puts legal technology spend growth at 48% over the past 12 months. As investment in legal technology rises, so does the value of ensuring that investment performs exactly as intended. The firms getting the most from their technology are the ones actively validating it.

Helm360’s Automated 3E Testing Tool collapses that two-to-three-week manual cycle into hours. Built on a library of over 1,000 battle-tested scripts developed across the world’s largest law firm deployments, it validates core processes such as time entry, billing, conflict checks, and financial reporting. Every run returns documented, screenshot-backed results that can be reused across future upgrades.

Comparing manual and automated testing side by side highlights the efficiency gain. The bigger impact shows up when firms start scaling.

Scalability Is the Bigger Story, Not Just Risk Reduction

Risk reduction often drives the initial conversation, but the real value of automated testing becomes clear as firms grow and systems are expected to handle peak demand without compromise.

Think about what growth actually looks like in practice. A merger brings in new users and workflows almost overnight. A migration from Elite Enterprise to Elite 3E introduces a new configuration layered over years of customisations. A firm expands from 80 to 500 attorneys and expects the system to maintain consistency at scale, the same way it did on day one.

Each of these moments introduces complexity. What matters is how well the system holds up when that complexity increases.

Automated testing gives firms a controlled way to prepare for that scale. Instead of waiting for performance issues to surface during critical periods, teams can validate how the system behaves under realistic conditions ahead of time.

This shift changes how firms approach growth:

- From reactive fixes during peak periods to proactive validation before they begin

- From limited test coverage to broad, repeatable validation across workflows

- From uncertainty at go-live to measured confidence backed by real test results

The most demanding periods (billing pushes, year-end close, and major firm events) stop being risk points and instead confirm that the system is ready.

The Real Return on QA Investment

Automated testing is often viewed as a project cost. In practice, its impact extends across every phase of the upgrade cycle and continues well beyond go-live.

When testing is structured, repeatable, and well-documented, the entire delivery process becomes more controlled. Timelines are easier to manage. Teams spend less time rechecking the same workflows. Each release builds on the last instead of starting over.

The impact is immediate:

- Go-lives stay aligned with planned timelines

- Project teams close out work without extended testing cycles

- Implementation effort remains predictable across releases

The longer-term value shows up in daily operations.

- Billing accuracy improves because systems behave as expected

- Reporting becomes more reliable across teams and leadership

- Integrations continue to perform without disruption after changes

In a law firm environment, these are not small gains. They directly influence how quickly invoices go out, how confidently partners review financials, and how much trust teams place in the system they rely on every day.

What Good Testing Actually Covers

Firms sometimes assume automated testing means running the same basic checks faster. In practice, a well-built test suite covers considerably more ground:

- Functional validation – every core workflow behaves as configured after a change.

- Regression coverage – existing functionality remains intact after updates.

- Integration testing – connected systems such as finance platforms, document management, and reporting tools continue operating reliably after every change.

- Load and performance testing – the system performs reliably during high-load periods, not just under single-user conditions.

- Data integrity checks – billing records, financial data, and reporting outputs are accurate before and after migration or upgrade.

Most firms test some of these. Few test all of them consistently. The gap between partial and comprehensive coverage is where most upgrade incidents originate.
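As a generic illustration of the data integrity category (not a description of Helm360's tool), a basic check can reconcile invoice totals exported before and after an upgrade. This minimal sketch assumes both exports have already been loaded into dictionaries keyed by invoice ID; the `reconcile` function, the invoice IDs, and the amounts are all hypothetical.

```python
from decimal import Decimal


def reconcile(before: dict[str, Decimal], after: dict[str, Decimal]) -> dict[str, list[str]]:
    """Compare invoice totals captured before and after an upgrade (hypothetical export format)."""
    # Invoices present before the upgrade but missing afterwards.
    missing = sorted(set(before) - set(after))
    # Invoices that appear only after the upgrade.
    unexpected = sorted(set(after) - set(before))
    # Invoices present in both exports whose totals no longer agree.
    mismatched = sorted(
        inv for inv in set(before) & set(after) if before[inv] != after[inv]
    )
    return {"missing": missing, "unexpected": unexpected, "mismatched": mismatched}


if __name__ == "__main__":
    # Illustrative sample data only.
    before = {
        "INV-1001": Decimal("1250.00"),
        "INV-1002": Decimal("480.50"),
        "INV-1003": Decimal("99.99"),
    }
    after = {
        "INV-1001": Decimal("1250.00"),
        "INV-1002": Decimal("480.05"),
        "INV-1004": Decimal("10.00"),
    }
    print(reconcile(before, after))
    # prints {'missing': ['INV-1003'], 'unexpected': ['INV-1004'], 'mismatched': ['INV-1002']}
```

Using `Decimal` rather than floats avoids rounding noise in currency comparisons: `Decimal("1.0")` and `Decimal("1.00")` compare equal, while a transposed amount such as 480.50 versus 480.05 is flagged. Because the check is scripted, the same comparison can be rerun unchanged on every future upgrade.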

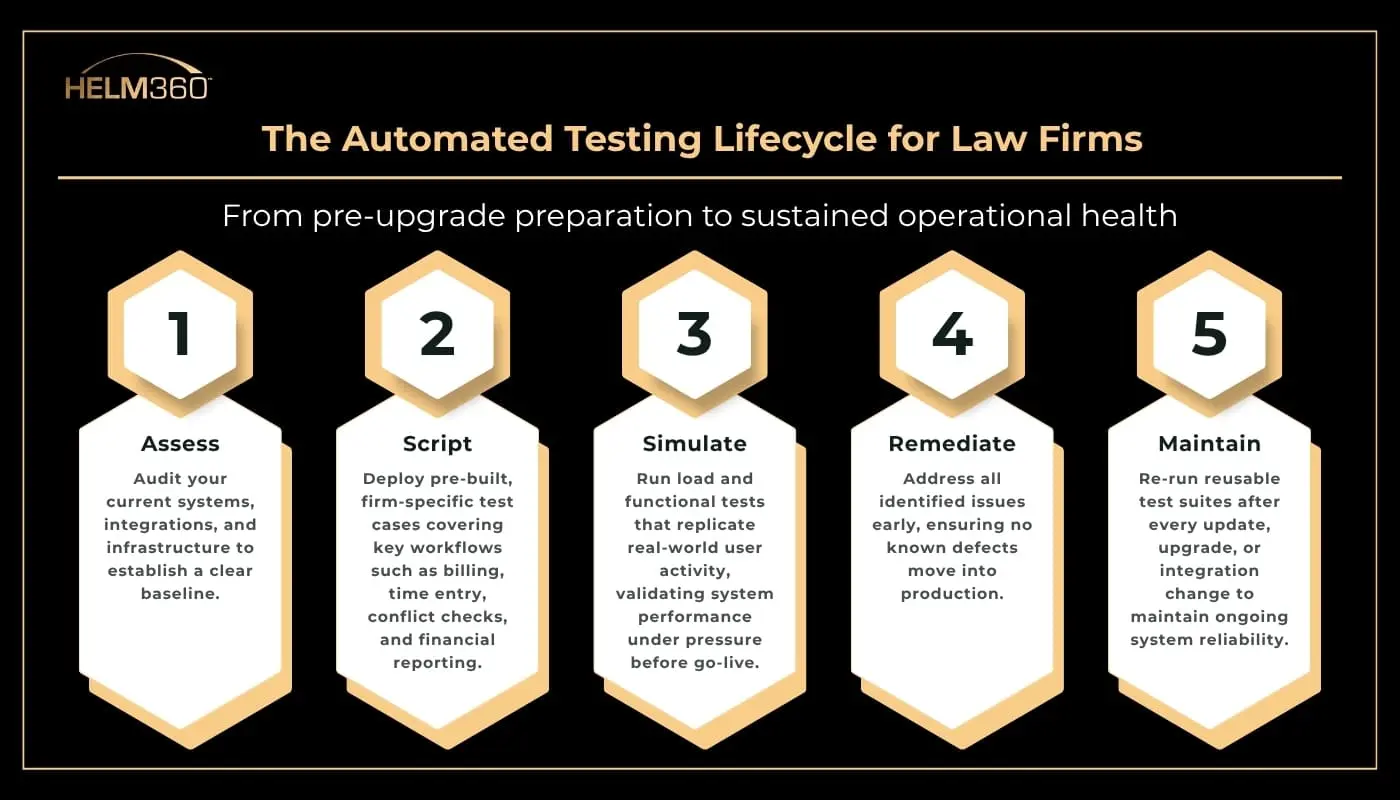

Understanding what thorough testing looks like is one part of the equation. The next step is building a process that delivers this level of validation consistently across every upgrade cycle.

That process typically follows a structured lifecycle in which each cycle strengthens the next. Over time, firms build a validated, living record of system behaviour – one that becomes more valuable with every release.

What Operational Stability Actually Looks Like

For firms operating at scale, stability is not defined by the absence of issues. It is reflected in how consistently the system performs when the business demands the most of it.

That consistency shows up in practical ways:

- Billing cycles complete on time, even during peak periods

- Partners access accurate financial data without delays or rework

- New offices and practice groups onboard without disruption

- Core workflows hold up under sustained demand

Firms running Elite 3E Cloud see this reinforced further through continuous updates that reduce disruption from major upgrades, along with automated workflows that help improve billing timelines and accuracy.

With ongoing support through Helm360’s Application Managed Services, this stability is maintained over time. Systems are monitored continuously, issues are identified early, and internal teams are able to stay focused on higher-value priorities rather than operational firefighting.

The firms that move ahead are not the ones avoiding change. They are the ones whose systems keep pace with their ambition, whatever scale that demands.

Where Firms Typically Fall Short

Even firms with the right platforms run into preventable issues when testing is not approached systematically. The challenge is rarely the software; it is how validation is executed. Four patterns come up consistently across firm sizes and geographies.

Testing covers only critical workflows. Edge cases go unvalidated because they seem unlikely, yet they are often the first to fail when volume increases unexpectedly.

Load testing is skipped or deferred. Performance issues are invisible in a low-user environment. They appear during month-end billing, year-end close, or a high-attendance firm event, exactly when the firm can least afford them.

Validation is compressed into tight pre-launch timelines. When testing is rushed, defects get rationalised rather than resolved. The assumption is that issues will surface post-go-live and be fixed quickly. That assumption is often wrong.

Test coverage is rebuilt from scratch with every upgrade. Without reusable test suites, each release starts at zero. The cumulative cost in time, resources, and risk grows with every cycle.

These gaps do not announce themselves during normal operations. They emerge under pressure, at the worst possible moment.

Many of these gaps are not technical; they are operational.

Your System Should Keep Up With Your Firm

Helm360’s legal technology experts help firms implement automated QA, performance testing, and managed services so every upgrade, merger, and growth phase runs with confidence.

Firms that scale successfully are not the ones reacting to issues; they are the ones building stability into their systems from the start.